Why Is an SEO Audit Important?

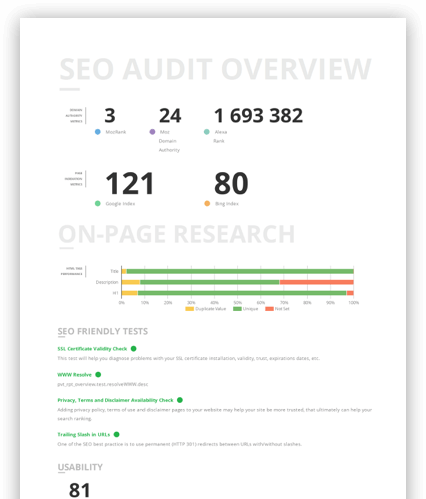

An in-depth SEO audit gives you insight, backed by raw data, into how well your website is optimised for online search.

By taking a deep dive into the inner workings of your website, our report offers a look into its overall SEO quality.

With this, you’ll be able to:

- Develop an informed approach to SEO

- Identify and fix any gaps that may be affecting your SEO performance

Contact Malaysia’s leading digital marketing agency for a full SEO Audit and fine-tune your digital strategy today!

What Does an SEO Audit Checklist Entail?

Primal’s SEO audit checklist is a step-by-step guide that determines the current performance of your business’s website. Follow these steps and you’ll have a great understanding of what you’re doing right and where you need to improve:

1. Check Robots.txt and XML sitemap

When it comes to improving your SERP rankings, there are two files that every business should have on its website: robots.txt and an XML sitemap. The first is placed within your website’s root directory and tells search engine bots which pages you want crawled and which they should stay out of. One important aspect of robots.txt is that it should reference the location of your XML sitemap.

The XML sitemap is another essential file as it contains a complete list of pages on your website that you want bots to access. Here, you can include extra details about each page like thumbnail descriptions or metadata, which helps search engines determine the importance of each page. With these three steps, you can use robots.txt and XML sitemap together to index your entire website and enhance your rankings:

1) Locate Your Sitemap URL

By adding /sitemap.xml to the end of your website’s domain, you can check to see if it exists. For example, type www.example.com/sitemap.xml into your web browser.

This screenshot shows how primal.co.th has multiple sitemaps listed. This technical method can significantly improve how search engines index your website and often leads to a boost in traffic.

You can read about the advantages of having multiple sitemaps over at moz.com. If you discover you don’t have a sitemap on your website, you can create one using an XML sitemap generator or by using the information available at sitemaps.org.

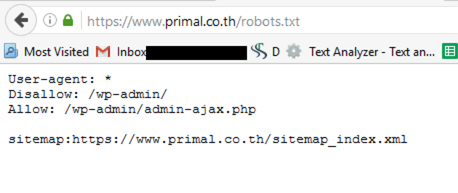
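If you want to see what a generator produces, here is a minimal sketch in Python that builds a bare-bones sitemap using the namespace published at sitemaps.org. The function name `build_sitemap` and the example.com page URLs are placeholders for illustration, not part of any particular tool.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(page_urls):
    """Return a minimal sitemap document (as a string) listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page_url in page_urls:
        url_element = ET.SubElement(urlset, "url")
        ET.SubElement(url_element, "loc").text = page_url
    return ET.tostring(urlset, encoding="unicode")


# Placeholder pages; replace these with the pages you want bots to access.
sitemap_xml = build_sitemap([
    "https://www.example.com/",
    "https://www.example.com/about/",
])
print(sitemap_xml)
```

A real sitemap would normally be saved as sitemap.xml in the root directory and may carry optional details for each page, but the structure stays the same.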

2) Locate Your Robots.txt file

You can also check to see if your robots.txt file exists by adding /robots.txt to the end of your domain. Type www.example.com/robots.txt into your browser.

If you already have a robots.txt file, check that its syntax is correct (i.e. that the file name and each directive are spelled and formatted properly). If your robots.txt file doesn’t exist, you’ll have to create one and place it in the root directory of your website’s server. This is usually the same folder that holds the website’s main “index.html”, although the location can differ across types of servers.

3) Add the sitemap location to Robots.txt (if it’s not there already)

You need to ensure your robots.txt file has a directive that lets search engines discover your XML sitemap automatically. Open the robots.txt file and add this line, using the full URL of your sitemap:

Sitemap: https://www.example.com/sitemap.xml

The robots.txt file will then look like this:

Sitemap: https://www.example.com/sitemap.xml

User-agent:*

Disallow: /wp-admin/

Allow: /wp-admin/admin-example.php

The above screenshot taken from primal.co.th shows how www.example.com/robots.txt should look when your XML sitemap has been set for auto-discovery.
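To double-check a file like this without a browser, you could run it through Python’s built-in `urllib.robotparser`. The sketch below is only an illustration: the robots.txt content and the example.com URLs are placeholders, and `site_maps()` needs Python 3.8 or later. The Allow line is left out on purpose, because Python’s parser applies rules in file order while Google applies the most specific matching rule, so the two can disagree on that line.

```python
from urllib.robotparser import RobotFileParser

# Placeholder robots.txt content, parsed from a string so no network is needed.
ROBOTS_TXT = """\
Sitemap: https://www.example.com/sitemap.xml

User-agent: *
Disallow: /wp-admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# site_maps() returns None when no Sitemap directive is present.
sitemaps = parser.site_maps() or []
admin_allowed = parser.can_fetch("*", "https://www.example.com/wp-admin/")
blog_allowed = parser.can_fetch("*", "https://www.example.com/blog/")

print("Sitemaps found:", sitemaps)
print("Bots may crawl /wp-admin/:", admin_allowed)
print("Bots may crawl /blog/:", blog_allowed)
```

An empty `sitemaps` list would tell you the Sitemap directive is missing and step 3 still needs doing.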

2. Check Protocol and Duplicate Versions

Now that we’ve verified these important files, the next step in Primal’s SEO check is to confirm that each of the following URLs either loads correctly or redirects to a single preferred version of your website.

- https://example.com

- https://example.com/index.php

- https://www.example.com

- https://www.example.com/index.php

Google also greatly prefers sites that use the enhanced security of HTTPS (HyperText Transfer Protocol Secure) compared to standard HTTP (HyperText Transfer Protocol). For e-commerce brands taking customer bank account numbers and credit card information, having this added layer of security is essential.
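As a rough sketch of this check, the Python below lists the protocol, www and index-page variants of a domain, including the plain HTTP ones, and offers a helper that reports where each variant ends up. The names `url_variants` and `final_url` are made up for this example, and `index.php` is assumed as the index page simply because it appears in the list above.

```python
from itertools import product
from urllib.request import urlopen


def url_variants(domain, index_page="index.php"):
    """List the protocol, www and index-page variants of a bare domain."""
    variants = []
    for scheme, host in product(("http", "https"), (domain, f"www.{domain}")):
        variants.append(f"{scheme}://{host}/")
        variants.append(f"{scheme}://{host}/{index_page}")
    return variants


def final_url(url, timeout=10):
    """Fetch a URL (following redirects) and return the address it ends on."""
    with urlopen(url, timeout=timeout) as response:
        return response.geturl()


variants = url_variants("example.com")
for variant in variants:
    print(variant)
```

Calling `final_url` on each variant of your own domain should ideally return the same address every time; if different variants end on different addresses, you may have duplicate versions of your site.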

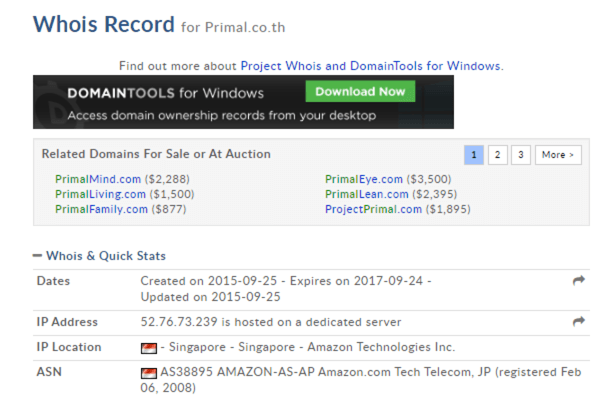

3. Check the Domain Age

Check how old your domain is and how recently its pages were updated to see if that’s impacting your SERP rankings. Pages that have gone a long time without updates are at risk of slipping from visibility in relevant search results.

Try using whois.domaintools.com and discover:

- The age of your website

- The status of your backlink profile – older domains tend to have larger backlink profiles

4. Check the Page Speed

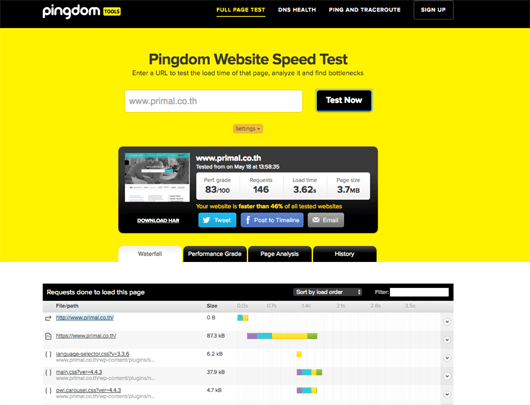

According to moz.com, a website’s speed is one of the leading factors Google uses to rank pages. With research telling us customers are far more likely to browse websites with quickly loading pages, your own digital presence must be rapid-fire. You can check the speed of your website using tools.pingdom.com. It’s a useful service that provides detailed information on both your loading times and performance against other websites.

Many elements contribute to a fast website. But you can start by compressing images, streamlining your content delivery network and optimising server response times. Don’t forget to complete this step for both your mobile and desktop websites.

5. Check URL Health

There are numerous ways to assess the health of your URLs. Start by checking these:

Page title

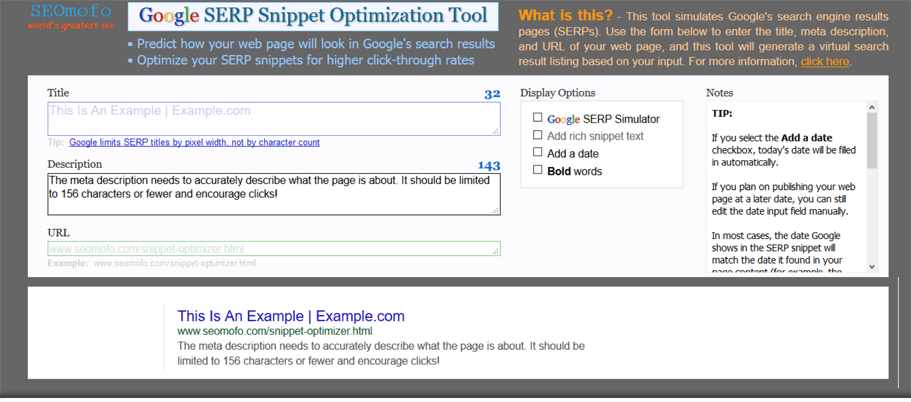

Page titles, also known as title tags, are essential to optimising your website content. Using fewer than 70 characters with a short and sharp approach, page titles should always accurately convey what the page is about. As they appear on the SERP and the browser tab, search engines use page titles to determine a page’s subject and how it should appear within relevant queries. Page titles can also be optimised with relevant keywords.

Meta description

Meta descriptions might not directly contribute to the optimisation of your website, but as they appear in the SERP, they can greatly influence whether someone clicks on your page. As meta descriptions provide additional details that can lure in visitors, creating unique copy that accurately outlines what appears on the page is a must. You can utilise a snippet optimizer tool to generate meta descriptions in the ideal length of 156 characters or less. Meanwhile, this tool can also be used to generate page titles.

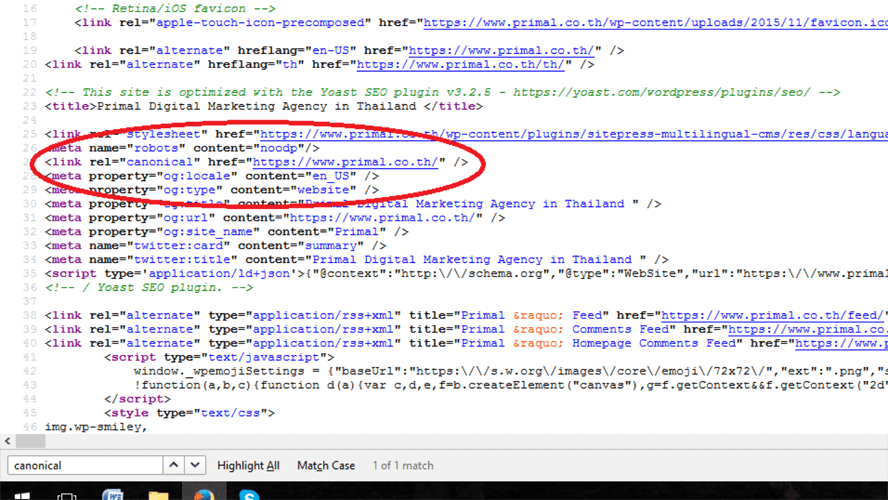
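If you’d rather check these lengths yourself, the following Python sketch reads a page’s title and meta description and compares them against the limits mentioned above (under 70 characters for titles, 156 or fewer for descriptions). The class and function names are hypothetical, and the sample HTML is a placeholder rather than a real page.

```python
from html.parser import HTMLParser

TITLE_LIMIT = 70          # titles should stay under this many characters
DESCRIPTION_LIMIT = 156   # descriptions should be this long or shorter


class SnippetParser(HTMLParser):
    """Collect the <title> text and the meta description of a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def check_snippet(html):
    """Report whether the title and description exist and fit the limits."""
    parser = SnippetParser()
    parser.feed(html)
    title = parser.title.strip()
    return {
        "title_ok": 0 < len(title) < TITLE_LIMIT,
        "description_ok": 0 < len(parser.description) <= DESCRIPTION_LIMIT,
    }


sample = """<html><head>
<title>SEO Audit Checklist</title>
<meta name="description" content="A step-by-step SEO audit checklist.">
</head><body></body></html>"""
report = check_snippet(sample)
print(report)
```

A missing title or description is reported as not OK, since an empty snippet gives searchers nothing to click on.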

Canonicalization

Canonicalization is how you can ensure your website doesn’t feature multiple versions of the same page. This is an important step because search engines can have a hard time determining what to display to users if there are several instances of the same thing. In addition, having duplicate versions of the same page can confuse visitors, which negatively impacts customer satisfaction and overall SERP rankings.

If you discover multiple iterations of a single page, it’s important to point them all to a primary version. This can be achieved through 301 redirects or canonical tags. The latter means adding a tag to the HTML header of each duplicate page that lists the preferred URL for bots to index instead.

For example:

Found within the HTML header of the page loading on this URL https://primal.co.th/index.php, there would be a link tag with rel="canonical" whose href attribute holds the preferred URL of the page.
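To illustrate, this Python sketch pulls the canonical URL out of a page’s HTML. The sample markup uses a placeholder example.com address rather than the actual tag from primal.co.th, and `find_canonical` is a name invented for this example.

```python
from html.parser import HTMLParser


class CanonicalParser(HTMLParser):
    """Remember the href of the first <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel_values = (attrs.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel_values and self.canonical is None:
            self.canonical = attrs.get("href")


def find_canonical(html):
    """Return the canonical URL declared in the HTML, or None if absent."""
    parser = CanonicalParser()
    parser.feed(html)
    return parser.canonical


# Placeholder page: a duplicate URL pointing bots at the preferred version.
page = '<html><head><link rel="canonical" href="https://www.example.com/"></head></html>'
canonical_url = find_canonical(page)
print(canonical_url)
```

If `find_canonical` returns `None` for a page you know has duplicates, that page is a candidate for a canonical tag or a 301 redirect.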

Headings (H1, H2, etc…)

Our SEO check will always assess page headings and ensure they feature high-quality keywords, although it’s important not to go overboard with optimisation. By avoiding the same keyword in multiple headings on the same page and keeping them relevant to the content, you can avoid duplication issues and increase your rankings.

Index, noindex, follow, nofollow, etc…

It’s important to make use of the following meta tags. These inform search engines about whether a specific page should be indexed and if links should be followed:

- Index: tells the search engine to index a specific page

- Noindex: tells the search engine not to index a specific page

- Follow: tells the search engine to follow the links on a specific page

- Nofollow: tells the search engine not to follow the links on a specific page
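These directives normally sit in the `content` attribute of a robots meta tag, for example `content="noindex, follow"`. As a minimal sketch, the hypothetical helper below turns such a value into a simple yes/no answer for indexing and link following. It assumes the usual defaults (index and follow when nothing is stated) and treats `none` as shorthand for noindex plus nofollow.

```python
def parse_robots_meta(content):
    """Interpret the content value of a robots meta tag."""
    directives = {part.strip().lower() for part in content.split(",") if part.strip()}
    blocked_entirely = "none" in directives
    return {
        "index": "noindex" not in directives and not blocked_entirely,
        "follow": "nofollow" not in directives and not blocked_entirely,
    }


print(parse_robots_meta("noindex, follow"))
print(parse_robots_meta(""))
```

Running this across your key pages is a quick way to spot a stray noindex that is keeping a page out of search results.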

Response codes – 200, 301, 404 etc.

Understanding HTTP status codes is another vital step for business owners. These reveal how your website is communicating with the server and diagnose potential issues that could be limiting how people interact with your business. Here are some common HTTP status codes that you might encounter:

- 200: Everything is okay.

- 301: Permanent redirect; everyone is redirected to the new location.

- 302: Temporary redirect; everyone is redirected to the new location, but the ‘link juice’ may not be passed on.

- 404: Page not found; the original page is gone and site visitors may see a 404 error page.

- 500: Server error; no page is returned and both site visitors and the search engine bots can’t find it.

- 503: Service unavailable; this response code essentially asks everyone to ‘come back later’.
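If you’re reviewing a crawl report with many URLs, a small helper can sort the codes into groups for you. The sketch below uses Python’s built-in `http.HTTPStatus` for the official phrases; the grouping labels and the function name `audit_status` are this example’s own, not a standard.

```python
from http import HTTPStatus


def audit_status(code):
    """Return the official phrase for a status code and a rough audit category."""
    phrase = HTTPStatus(code).phrase
    if code == 200:
        category = "ok"
    elif code in (301, 308):
        category = "permanent redirect"
    elif code in (302, 307):
        category = "temporary redirect"
    elif 400 <= code < 500:
        category = "client error"
    elif code >= 500:
        category = "server error"
    else:
        category = "other"
    return phrase, category


for status_code in (200, 301, 302, 404, 500, 503):
    print(status_code, audit_status(status_code))
```

Anything that lands in the client error or server error groups deserves a closer look before you move on.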

Visit moz.com for more information on the importance of response codes.

Word counts/thin content

Since the release of Google’s Panda algorithm update, thin content has performed poorly. This is the kind of content that’s short, generic and doesn’t offer customers any genuinely valuable information. Nowadays, businesses experience much better SERP rankings if they focus their attention on producing content that’s informative, engaging and at least 300 to 500 words long.

The creation of educational and rewarding content is key to SEO success.
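For a quick first pass at spotting thin pages, you could count the visible words on each page. This Python sketch strips out the markup, ignores scripts and styles, and flags anything under 300 words, the lower end of the range above. The names `count_words` and `is_thin` are invented for this example, and word count alone says nothing about quality.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Count the words in visible text, skipping scripts and styles."""

    SKIPPED_TAGS = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.words = 0
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.words += len(data.split())


def count_words(html):
    """Return the number of visible words in an HTML document."""
    extractor = TextExtractor()
    extractor.feed(html)
    extractor.close()
    return extractor.words


def is_thin(html, minimum_words=300):
    """Flag a page whose visible word count falls below the minimum."""
    return count_words(html) < minimum_words


sample_page = "<p>one two three</p><script>var a = 1;</script>"
print(count_words(sample_page), is_thin(sample_page))
```

Treat a flagged page as a prompt to review it by hand rather than as proof that the content is poor.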

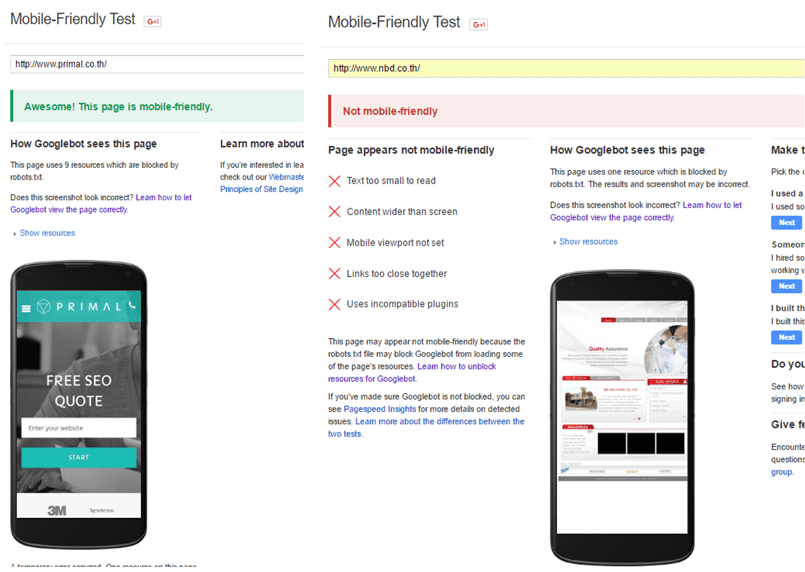

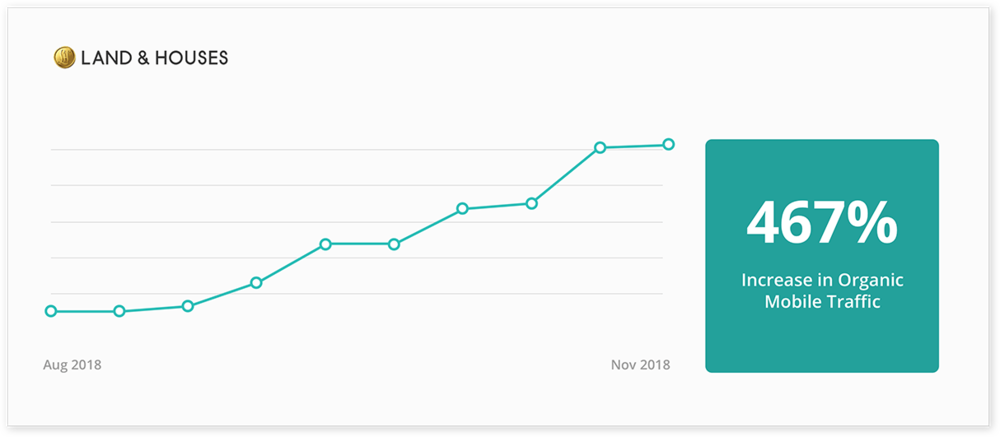

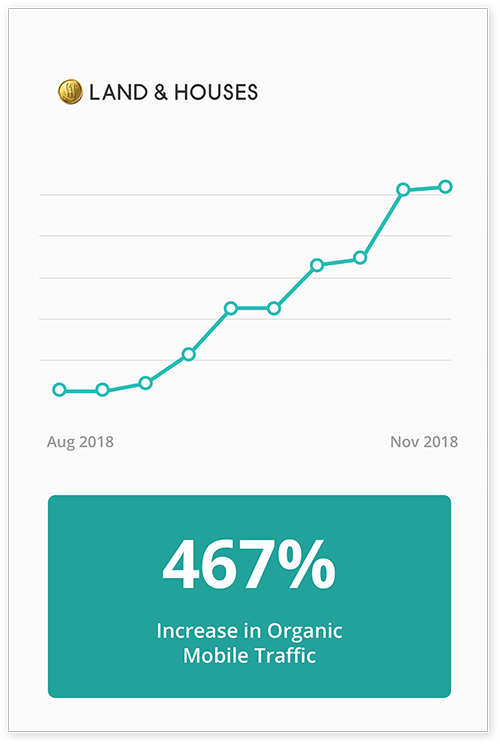

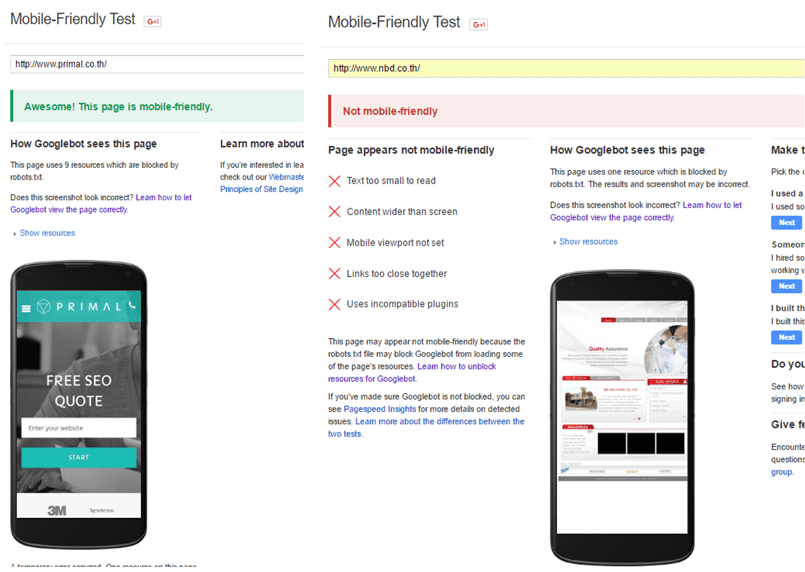

6. Check the Site’s Mobile Friendliness

According to internet monitoring firm StatCounter, mobile internet access surpassed desktop in 2016. With this figure only growing since then, having a mobile-first website design is essential to meeting your customers’ needs. Google has also recognised this seismic shift, using what it calls mobile-first indexing, which means the mobile version of your pages is primarily what gets indexed and ranked. Considering these factors, you should use Google’s own Mobile Friendliness Tool to determine how your website measures up in the smartphone space.

How a mobile-friendly website looks compared to an outdated one.

7. Check Backlink Profiles

Building a backlink network can have a hugely positive impact on the success of your SEO campaign. When other websites across the internet link to your business, Google sees this as meaning your content has something valuable to offer users. By gaining more backlinks, your website is generally more likely to rank higher.

There are some important things to avoid when it comes to backlinks. Google can penalise websites for having too many ‘dofollow’ links coming from a single source, while backlinks from low-quality websites are also seen as a negative. Ultimately, this means it's better to achieve high-quality links over sheer numbers. By including unnatural links, your website runs the risk of receiving a Google penalty and being deindexed – a potentially devastating outcome for online businesses.

However, the Google Search Console provides a route to disavowing unwanted backlinks. You have to create a backlink report of all the links leading to your website before uploading a document to Google that details the precise links you want to renounce. By regularly completing this process, you can avoid any penalties and keep your content performing great.
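The document you upload is a plain text file with one entry per line: either a full URL, or a whole domain written as `domain:` followed by the domain name, with `#` marking comment lines. As a hedged sketch, the Python below assembles such a file from lists you’ve already reviewed. The function name and the `.example` domains are placeholders for illustration.

```python
def build_disavow_file(domains=(), urls=(), note=""):
    """Build the text of a disavow file from reviewed domains and URLs."""
    lines = []
    if note:
        lines.append(f"# {note}")
    # Whole domains are written with the "domain:" prefix, one per line.
    lines.extend(f"domain:{domain}" for domain in sorted(set(domains)))
    # Individual pages are listed as full URLs, one per line.
    lines.extend(sorted(set(urls)))
    return "\n".join(lines) + "\n"


disavow_text = build_disavow_file(
    domains=["spam.example", "links.example"],
    urls=["https://blog.example/bad-page.html"],
    note="Links reviewed during SEO audit",
)
print(disavow_text)
```

Only list links you’re confident are harmful, since disavowing good backlinks can work against you.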

Maximise Your Optimisation Potential

After you’ve completed this extensive SEO audit, you’ll know the exact areas of your website that require the most attention. With this step-by-step guide illuminating where you should start, it’s just a matter of implementing the latest optimisation techniques and boosting your SERP rankings.

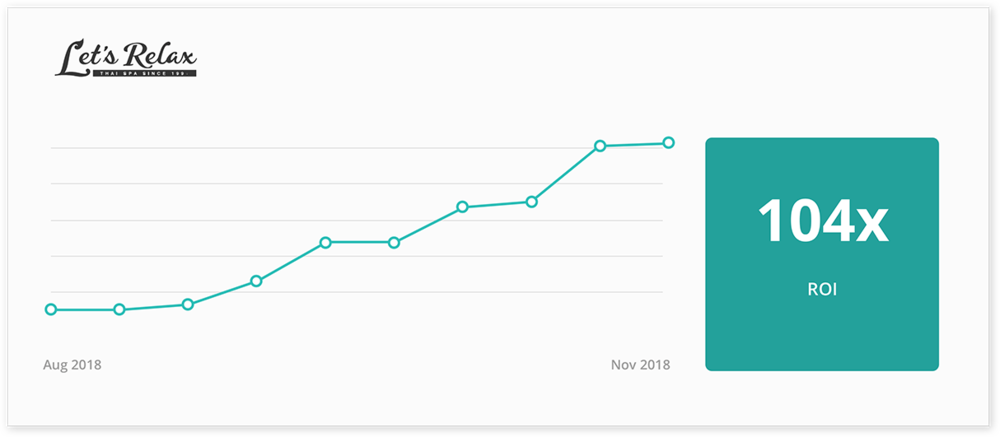

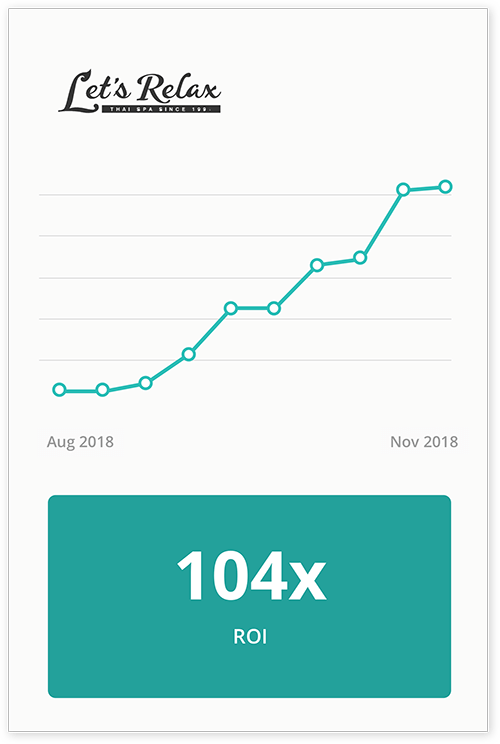

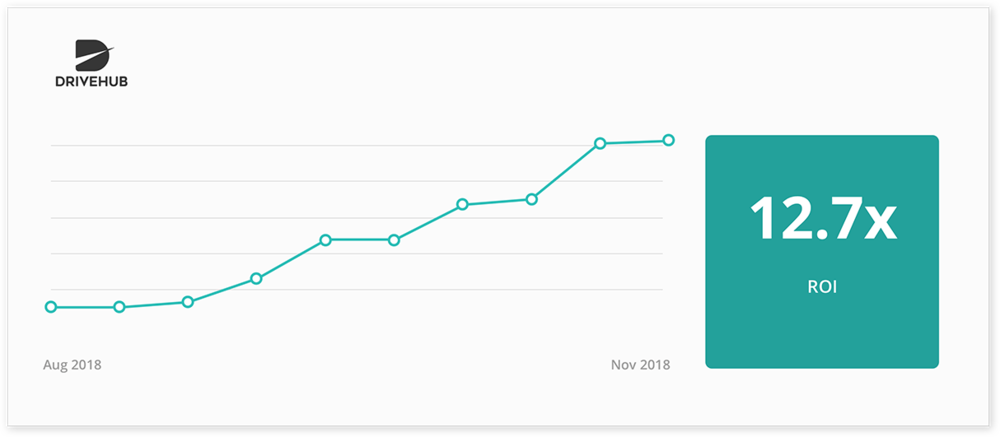

Backed by an incredibly solid foundation for your digital presence, you can then begin implementing a widespread digital marketing campaign that generates even more success. Primal’s reputation as a leading SEO agency ensures we can deliver outstanding results for your business.

Contact us to find out how we can conduct a free site audit before developing an effective digital marketing campaign that outshines your competition.